Talking Shop: Assistant Professor Alberto Rodriguez

Robotic Dexterity for Warehouse Efficiency

Assistant Professor Alberto Rodriguez led a team in this past May’s Amazon Picking Challenge, and their robot won second place out of 28 entrants. The challenge, whose judging panel included MechE alum Pete Wurman (SB ’87) – a senior executive at bot-building company Kiva Systems – tasked competitors with developing a robot dexterous enough to pick items out of bins and place them onto pallets or into boxes. Professor Rodriguez has been working on this type of problem for years, having focused his PhD at Carnegie Mellon University on robot manipulation and dexterity.

MC: What piqued your interest about competing in the Amazon Picking Challenge?

AR: The Picking Challenge is a very simplified version of the real problem, but the question of how to develop robots that can help manage warehouses, deliveries, and grocery shopping is a very hot topic in the field of robotics. Several decades ago, the main economic driver for dexterous robots was the need for manufacturing automation. More recently, the economic incentive was less clear. But now, with the spread of e-commerce, a need to manage warehouses more efficiently has come to the forefront, and there is a very clear economic advantage to solving the problem. It’s one that many researchers can connect with, so it’s a good place for collaboration. It’s also a great project for my students that will advance our research in the lab significantly.

What are some of the technical challenges in developing these types of bots?

There are quite a few challenges in developing a robust automated solution. It doesn’t sound like a complex problem, but there are many intricacies when you start looking into the specifics of the different ways in which objects can be arranged with respect to each other and to the constraints in the environment. The robots have to be able to pick up objects on shelves or inside bins where there is no clear access, not only in terms of perception but also in terms of the dexterity needed to get inside. These are objects that are clustered with others, so it’s challenging for a robot to understand exactly what the right strategy is to get in there and grab the right item without colliding with everything around it. There is a lot of complexity involved in that process, both in terms of dexterity and perception.

How significantly is your group focusing on artificial intelligence?

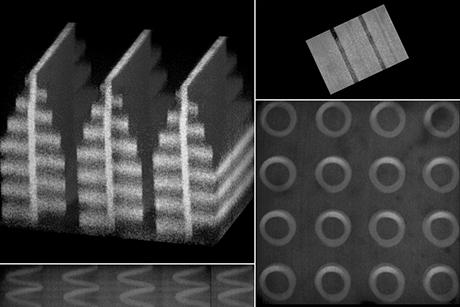

Our focus is more on the physical interaction of what I like to call “the last inch.” It is challenging to plan the motion of getting close to things, but there is yet another challenge robots face once they get there and need to make contact. The robot needs to know exactly what to do with its fingers and how to react to unexpected contacts or when something slips. That is “the last inch,” and it is the focus of my research. I look at it from several perspectives. One is design: How do you develop grippers that can account for the variability in objects’ shapes and materials, or the different constraints in the environment? Another is perception: There is information that the robot should be able to recover from contact to help it make decisions, such as an object’s weight, inertia, and pose, or other mechanical properties such as friction. Another is control: Once the robot knows enough about itself and the environment around it, it needs to plan and execute a motion that will contact the environment, for example by pushing or pulling on it.

What possible solutions have you chosen to pursue?

One idea we’re working on is to develop a robotic hand that pushes against the environment to manipulate an object. This means that the robot would use the environment as a fixture by pushing against it to obtain some reconfiguration of a grasped object, almost as if using the environment as an extra “finger.”

How would you use the environment in that way in a manufacturing setting?

In a manufacturing setting – on an assembly line, for example – there are parts coming in at a certain speed. They might come in on a conveyor belt, or in trays or bins, but either way the robot needs to grab one of those parts for assembly. But the constraints on how it can pick up the part often prevent it from picking it up in the right orientation. So it can pick it up, but now that it’s in the hand, the robot needs to do some reconfiguration before it can move it to the next stage. One of the classic ways of doing this reconfiguration is to have a fixture the robot uses to put the part down and then re-grab it. The problem with that approach is that it tends to be slow. In assembly lines, companies want things that work every second, or every two seconds; that’s the throughput that they have. One way to make that process faster would be to replace the act of re-grasping (grab, reposition, re-grasp) with pushing against the environment. So the robot picks up a bolt and pushes it against the environment so that it is in the right orientation, and then screws it in.