A new model offers robots precise pick-and-place solutions

Pick-and-place machines are a type of automated equipment used to place objects into structured, organized locations. These machines are used for a variety of applications, from electronics assembly to packaging, bin picking, and even inspection, but many current pick-and-place solutions are limited. They lack “precise generalization,” the ability to solve many tasks without compromising on accuracy.

“In industry, you often see that [manufacturers] end up with very tailored solutions to the particular problem that they have, so a lot of engineering and not so much flexibility in terms of the solution,” says Maria Bauza Villalonga PhD ’22, a senior research scientist at Google DeepMind, where she works on robotics and robotic manipulation. “SimPLE solves this problem and provides a solution to pick-and-place that is flexible and still provides the needed precision.”

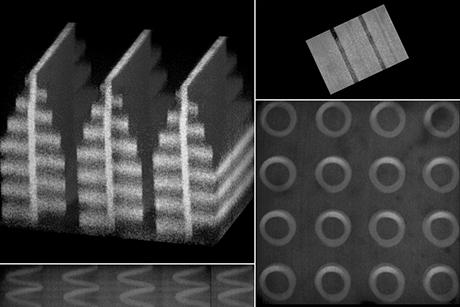

A new paper by MechE researchers, published in the journal Science Robotics, explores pick-and-place solutions with more precision. In precise pick-and-place, also known as kitting, the robot transforms an unstructured arrangement of objects into an organized arrangement. The approach, dubbed SimPLE (Simulation to Pick Localize and placE), learns to pick, regrasp, and place objects using each object’s computer-aided design (CAD) model, all without any prior experience or encounters with the specific objects.

“The promise of SimPLE is that we can solve many different tasks with the same hardware and software using simulation to learn models that adapt to each specific task,” says Alberto Rodriguez, an MIT visiting scientist who is a former member of the MechE faculty and now associate director of manipulation research for Boston Dynamics. SimPLE was developed by members of the Manipulation and Mechanisms Lab at MIT (MCube) under Rodriguez’ direction.

“In this work we show that it is possible to achieve the levels of positional accuracy that are required for many industrial pick and place tasks without any other specialization,” Rodriguez says.

Using a dual-arm robot equipped with visuotactile sensing, the SimPLE solution employs three main components: task-aware grasping, perception by sight and touch (visuotactile perception), and regrasp planning. Real observations are matched against a set of simulated observations through supervised learning so that a distribution of likely object poses can be estimated, and placement accomplished.
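The matching step described above can be illustrated with a minimal sketch. It is not the SimPLE implementation. It assumes each observation has already been reduced to a feature vector (standing in for a learned embedding), and it turns the similarity between the real observation and each simulated one into a probability over candidate poses. The function name `pose_distribution`, the `temperature` parameter, and the toy data are all hypothetical.

```python
import math

def pose_distribution(real_embedding, simulated, temperature=0.1):
    """Return (pose, probability) pairs, most likely pose first.

    simulated: list of (pose, embedding) pairs generated in simulation.
    All names and the similarity measure are illustrative assumptions.
    """
    # Score each simulated observation by negative squared distance
    # to the real observation, scaled by the temperature.
    scores = [
        -sum((r - s) ** 2 for r, s in zip(real_embedding, emb)) / temperature
        for _, emb in simulated
    ]
    # Softmax over the scores (subtract the max for numerical stability).
    top = max(scores)
    weights = [math.exp(score - top) for score in scores]
    total = sum(weights)
    return sorted(
        ((pose, w / total) for (pose, _), w in zip(simulated, weights)),
        key=lambda item: item[1],
        reverse=True,
    )

# Toy library: poses as (x_mm, y_mm, yaw_deg) with made-up embeddings.
simulated_library = [
    ((0.0, 0.0, 0.0), [1.0, 0.0, 0.0]),
    ((2.0, 0.0, 15.0), [0.8, 0.6, 0.0]),
    ((0.0, 3.0, 90.0), [0.0, 1.0, 0.0]),
]
real_observation = [0.85, 0.5, 0.0]

distribution = pose_distribution(real_observation, simulated_library)
best_pose, best_prob = distribution[0]
```

Keeping the full distribution, rather than only the best match, lets a planner see when several poses remain plausible, which is the kind of ambiguity a regrasp could be used to reduce.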

In experiments, SimPLE picked and placed diverse objects spanning a wide range of shapes, achieving successful placements over 90 percent of the time for 6 objects, and over 80 percent of the time for 11 objects.

“There’s an intuitive understanding in the robotics community that vision and touch are both useful, but [until now] there haven’t been many systematic demonstrations of how it can be useful for complex robotics tasks,” says mechanical engineering doctoral student Antonia Delores Bronars SM ’22. Bronars, who is now working with Pulkit Agrawal, assistant professor in the Department of Electrical Engineering and Computer Science (EECS), is continuing her PhD work investigating the incorporation of tactile capabilities into robotic systems.

“Most work on grasping ignores the downstream tasks,” says Matt Mason, chief scientist at Berkshire Grey and professor emeritus at Carnegie Mellon University, who was not involved in the work. “This paper goes beyond the desire to mimic humans, and shows from a strictly functional viewpoint the utility of combining tactile sensing, vision, with two hands.”

Ken Goldberg, the William S. Floyd Jr. Distinguished Chair in Engineering at the University of California at Berkeley, who was also not involved in the study, says the robot manipulation methodology described in the paper offers a valuable alternative to the trend toward AI and machine learning methods.

“The authors combine well-founded geometric algorithms that can reliably achieve high-precision for a specific set of object shapes and demonstrate that this combination can significantly improve performance over AI methods,” says Goldberg, who is also co-founder and chief scientist for Ambi Robotics and Jacobi Robotics. “This can be immediately useful in industry and is an excellent example of what I call 'good old fashioned engineering' (GOFE).”

Bauza and Bronars say this work was informed by several generations of collaboration.

“In order to really demonstrate how vision and touch can be useful together, it’s necessary to build a full robotic system, which is something that’s very difficult to do as one person over a short horizon of time,” says Bronars. “Collaboration, with each other and with Nikhil [Chavan-Dafle PhD ’20] and Yifan [Hou PhD ’21 CMU], and across many generations and labs really allowed us to build an end-to-end system.”